Testing Einstein

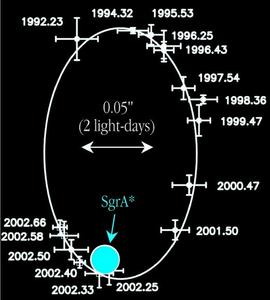

Motion of a star around the galactic center, demonstrating that Sagittarius A* is a black hole (adapted from Schödel et al., Nature, 17 Oct 2002)

An Unfinished Job

Einstein's theory of general relativity has passed every test that it has ever been put to. Nevertheless there are at least four good reasons to think that the theory is incomplete and will eventually need to be overthrown in just the same way that Newton's was. Firstly, general relativity predicts its own demise; it breaks down in singularities, regions where the curvature of spacetime becomes infinite and the field equations can no longer be applied. These cannot be dismissed as mere academic curiosities, because they do apparently occur in the real universe if general relativity holds. Theoretical work by Stephen Hawking and others has proven that singularities must form within a finite time (the universe is necessarily "geodesically incomplete"), given only very generic assumptions such as the positivity of energy. Two places where we expect to find them are at the big bang, and inside black holes like the one at the center of the Milky Way. If we are to fully understand these phenomena, then general relativity must be modified or extended in some way.

Secondly, there is the question of cosmology. Under the reasonable assumptions that the universe on large scales is homogeneous and isotropic (the same in all places and in all directions), as suggested by observation in combination with the Copernican principle, general relativity has led to the creation of a cosmological theory known as the big bang theory. This theory has had some spectacular successes; for instance, the prediction of the cosmic microwave background radiation, the calculation of the abundances of light elements, and a basis for understanding the origin of structure in the universe. It also has some weaknesses, notably involving finely tuned initial conditions (the "flatness" and "horizon problems").

Background galaxy (blue) being gravitationally lensed by dark matter in foreground cluster CL 0024+1654 (yellow) (Hubble Space Telescope image)

More troublingly, in recent decades it has become impossible to match the predictions of big-bang cosmology with observation unless the thin density of matter observed in the universe (i.e. that which can be seen by emission or absorption of light, or inferred from consistency with light-element synthesis) is supplemented by much larger amounts of unseen dark matter and dark energy that cannot consist of anything in the standard model of particle physics. The observations are quite clear: the required exotic dark matter has a density some five times that of standard-model matter, and the required dark energy has an energy density some three times greater still. To date, there is no direct experimental evidence for the existence of either component, and there are strong theoretical reasons (the "cosmological constant problem") to be suspicious of dark energy in particular. There is also no convincing explanation of why two new and as-yet unobserved forms of matter-energy should be so closely matched in energy density (the "coincidence problem"). While the majority of cosmologists seem prepared to accept both dark matter and dark energy as necessary, if inelegant, facts of life, others are beginning to interpret them as possible evidence of a breakdown of general relativity at large distances and/or small accelerations.
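The density ratios quoted above can be turned into a rough cosmic energy budget. The sketch below assumes that the three components are the only significant contributors to the total, that the quoted ratios are exact, and that "three times greater still" means three times the dark matter density; all three are simplifications made for illustration.

```python
# Relative energy densities as quoted in the text (treated as exact here).
baryons = 1.0                    # standard-model matter, arbitrary units
dark_matter = 5.0 * baryons      # "some five times" standard-model matter
dark_energy = 3.0 * dark_matter  # "some three times greater still" (assumed: 3x dark matter)

total = baryons + dark_matter + dark_energy

# Fraction of the total energy density carried by each component.
fractions = {
    "standard-model matter": baryons / total,
    "dark matter": dark_matter / total,
    "dark energy": dark_energy / total,
}

for name, frac in fractions.items():
    print(f"{name:>22}: {100 * frac:.1f}%")
```

Under these assumptions, standard-model matter makes up only about one part in 21 of the total.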

Thirdly, existing tests of general relativity have been restricted to weak gravitational fields (or moderate ones in the case of the binary pulsar). Major deviations in this regime would have been surprising, since Einstein's theory goes over to Newton's in the weak-field limit, and we know that Newtonian gravity works reasonably well. But surprises are quite possible, and even likely, in the strong-field regime. The reason why is closely related to the fourth motivation for continuing to test Einstein's theory: general relativity as it stands is incompatible with the rest of physics (i.e. the "standard model" based on quantum field theory). The problem is only partly due to the fact that the gravitational field carries energy and thus "attracts itself"; this makes the theory nonlinear and more difficult, but not necessarily impossible, to quantize. (Yang-Mills fields also possess self-couplings but are perfectly quantizable.) The deeper problem is not nonlinearity but nonrenormalizability, which is inherent in the physical dimensionality of gravity itself (i.e., in the fact that the gravitational field couples to mass rather than any other kind of "charge"). In field-theory language, quantization of gravity requires an infinite number of renormalization parameters. It is widely believed that our present theories of gravity and/or the other interactions are only approximate "effective field theories" that will eventually be seen as limiting cases of a unified theory in which all four forces become comparable in strength at very high energies. But there is no consensus as to whether it is general relativity or particle physics (or both) that must be modified, let alone how. Experimental input may be our only guide to unification, the last great remaining problem in theoretical physics.

The Equivalence Principle

Gravitational experiments can be divided into two kinds: those that test fundamental principles and those that test individual theories, including general relativity. The fundamental principles include such basic axioms as local position invariance (or LPI; the outcome of any experiment should be independent of where or when it is performed) and local Lorentz invariance (or LLI; the outcome of any experiment should be independent of the velocity of the freely-falling reference frame in which it is performed), which we will not discuss here. The fundamental principle of most direct physical relevance to general relativity is the equivalence principle, which asserts that gravitation is locally equivalent to acceleration. In practical terms this means that different falling bodies should follow the same trajectory in the same gravitational field, independent of their mass or internal structure, provided they are small enough not to disturb the environment or to be affected by tidal forces. To test this principle, one drops objects of different mass or composition in the same gravitational field and looks for differences in rate of fall. Such experiments have a long and fascinating history.

Stevin

The Greek philosopher Aristotle saw no need to do them at all; he knew by reason alone that a heavier mass "must" fall more quickly than a lighter one, since it is the nature of earth-like elements to strive toward the center of the universe. Such was his authority that there are no records of anybody actually testing this prediction until nearly ten centuries later (6th century AD) when the Byzantine scholar John Philoponus wrote in a commentary on Aristotle: "If you let fall from the same height two weights of which one is many times as heavy as the other, you will see that the ratio of times required for the motion does not depend on the ratio of the weights, but that the difference in time is a very small one" (italics added). First to describe an actual experiment in the modern sense was Flemish engineer Simon Stevin (1548/9-1620), who wrote in 1586: "My experience against Aristotle is the following. Let us take ... two spheres of lead, one ten times larger and heavier than the other, and drop them together from a height of 30 feet onto a board ... Then it will be found that the lighter will not be ten times longer on its way than the heavier, but that they will fall together onto the board so simultaneously that their two sounds seem to be as one" (italics added). Some years later Galileo Galilei (1564-1642) described a similar experiment involving a cannonball and a musket ball. Contrary to almost universal belief, he did not claim to have dropped these balls from the Leaning Tower of Pisa; that story comes from his last pupil and biographer and its authenticity is far from certain. What is certain is that Galileo understood the importance of this test better than any before him. He used a variety of materials including gold, lead, copper and stone, and improved the experiment by rolling his test masses down inclined tables (to dilute gravity) and eventually by using pendulums (to reduce friction). He concluded in the Discourses and Mathematical Demonstrations Concerning Two New Sciences (1638) that "If one could totally remove the resistance of the medium, all substances would fall at equal speeds".

Newton

Eötvös

Many people have improved on these tests since, most notably Isaac Newton (1643-1727) and Loránd Eötvös (1848-1919). Newton improved on Galileo's pendulum experiments, and perceived with characteristic brilliance that celestial bodies could also serve as test masses (in particular he checked that the earth and moon, as well as Jupiter and its satellites, fall toward the sun at the same rate). Newton's idea was reintroduced as a test of the equivalence principle by Kenneth Nordtvedt in the 1970s, and it now provides one of the two strongest limits on possible violations of equivalence: the earth (with a nickel-iron core) and the moon (composed mostly of silicates, like the earth's mantle) fall toward the sun with accelerations that differ by no more than 3 parts in 10^13. This accuracy is made possible by lunar laser ranging measurements that make use of reflectors left on the moon's surface by the Apollo astronauts.

Eötvös' innovation was to introduce the use of the torsion balance, which enabled an improvement in sensitivity of six orders of magnitude over Newton's pendulum tests, reaching a precision of 5 parts in 10^9. Torsion balances are still the basis for the best terrestrial limits on violations of the equivalence principle today; the best such limits (by Eric Adelberger and his collaborators) are identical to those obtained from the celestial method, and limit any difference between the accelerations of different test masses to less than 3 parts in 10^13. Other kinds of equivalence-principle experiments using laser atom interferometry to measure differences in rate of fall for isotopes of different atomic mass may reach even higher precision in the future. However, earthbound tests of the equivalence principle are subject to fundamental limitations imposed by seismic noise, tidal effects and systematic uncertainties in lunar modeling. It is likely that further significant increases in precision will require going into space.
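To get a feel for how small a violation of 3 parts in 10^13 is, one can ask how far apart two bodies would land in a drop like Stevin's. The sketch below uses the fractional acceleration difference usually called the Eötvös parameter; that name, the function, and the value of surface gravity are assumptions introduced here for illustration.

```python
def eotvos_parameter(a1: float, a2: float) -> float:
    """Fractional difference in free-fall acceleration of two bodies."""
    return 2.0 * abs(a1 - a2) / (a1 + a2)


G_EARTH = 9.81        # m/s^2, nominal surface gravity (assumed)
DROP_HEIGHT = 9.144   # m, the 30 feet of Stevin's experiment
ETA_LIMIT = 3e-13     # bound quoted in the text

# Two hypothetical bodies whose accelerations differ by exactly the bound.
a_heavy = G_EARTH
a_light = G_EARTH * (1.0 - ETA_LIMIT)
eta = eotvos_parameter(a_heavy, a_light)

# Both bodies fall for the same time, and distance fallen scales with
# acceleration, so the slower body trails by this much when the faster lands.
gap = (a_heavy - a_light) / a_heavy * DROP_HEIGHT

print(f"eta = {eta:.2e}, gap after a 30 ft drop = {gap:.2e} m")
```

The resulting gap is a few trillionths of a meter, far smaller than an atom, which is why direct drop tests gave way to torsion balances and lunar ranging.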

One such experiment, the Satellite Test of the Equivalence Principle (STEP), is currently under development at Stanford University with a design sensitivity of one part in 10^18, an improvement of more than five orders of magnitude over current limits. At this level, an equivalence-principle experiment is capable of testing not only general relativity, but also theories that go beyond it and attempt to unify gravity with the other forces of nature, including versions of quantum gravity, string theory and quintessence cosmology. STEP will inherit some of the key technologies that have been proven by Gravity Probe B, including drag-free control and a readout system based on SQUIDs (Superconducting QUantum Interference Devices).

Gravitational Redshift

This was the first experimental test of gravitation that Einstein proposed, and it is often called one of the "three classical tests" of general relativity. The existence of the gravitational redshift effect, however, follows from the equivalence principle alone, so it is not a test of general relativity per se and is more properly grouped with the fundamental tests. (Some have called it the "half-test" in Einstein's "two and a half classical tests" of general relativity.) A clock in a gravitational field is, by the equivalence principle, indistinguishable from an identical one in an accelerated frame of reference. The gravitational redshift is thus equivalent to a Doppler shift between two accelerating frames. The first accurate measurement of this effect was made by R.V. Pound, G.A. Rebka and J.L. Snider in the 1960s using the frequency shift between two atomic "clocks" moving up and down inside Harvard University's Jefferson tower. They made use of a sensitive phenomenon called the Mössbauer effect to measure this shift to an accuracy of about 1%.
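The Doppler argument gives the size of the effect directly: while light travels a height h, a frame accelerating at g gains speed gh/c, so the fractional frequency shift is gh/c^2. The sketch below evaluates this for a tower experiment; the tower height of 22.5 m and the value of g are assumptions not stated in the text.

```python
C = 299_792_458.0     # speed of light, m/s
G_EARTH = 9.81        # m/s^2, nominal surface gravity (assumed)
TOWER_HEIGHT = 22.5   # m, approximate height of the Harvard setup (assumed)


def fractional_shift(height_m: float, g: float = G_EARTH) -> float:
    """Weak-field gravitational frequency shift, delta f / f = g h / c^2."""
    return g * height_m / C**2


shift = fractional_shift(TOWER_HEIGHT)
print(f"delta f / f over {TOWER_HEIGHT} m = {shift:.2e}")
```

A shift of a few parts in 10^15 is what made an exceptionally sharp spectral line, such as that provided by the Mössbauer effect, necessary.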

A similar accuracy has been reached by experiments comparing clocks on earth to those on spacecraft such as Voyager (in Saturn's gravitational field) or Galileo (in the field of the sun). Other experimenters have looked for the shift of spectral lines in the sun's gravitational field, an attempt that was confounded for many years by solar "limb effects". Oxygen triplet lines finally allowed a 2% detection by James LoPresto et al. in 1991. Another test compares terrestrial timepieces to the highly stable astronomical "clocks" known as pulsars; this yields accuracies of about 10%. The most precise gravitational redshift test to date was carried out by Robert Vessot and Martin Levine in 1976 and is known as Gravity Probe A. It compared a hydrogen maser clock on earth to an identical one lifted into orbit at about 10,000 km, and confirmed theoretical expectations to an accuracy of 0.02%.

It is interesting to note that the Global Positioning System (GPS), while not intended or used as a test of general relativity, does effectively serve as confirmation of the gravitational redshift effect. To reach their specified (civilian) navigational accuracy of about 15 m, GPS satellites must coordinate their time signals to within about 50 nanoseconds, a precision nearly 1000 times smaller than the size of the gravitational redshift effect (almost 40 microseconds per day at their operating altitude of 20,000 km). If they did not take Einstein's theory into account, GPS trackers in aircraft cockpits would be off by kilometers within a day!
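The figure of almost 40 microseconds can be checked with a short estimate. The sketch below assumes a circular orbit at the 20,000 km altitude quoted above, a spherical earth, and a ground clock at rest; it combines the gravitational redshift (which makes the orbiting clock run fast) with special-relativistic time dilation (which makes it run slow). The constants are standard values supplied here, not taken from the text.

```python
GM_EARTH = 3.986004418e14   # m^3/s^2, earth's gravitational parameter
C = 299_792_458.0           # speed of light, m/s
R_EARTH = 6.371e6           # m, mean radius of the earth
ALTITUDE = 2.0e7            # m, the 20,000 km quoted in the text
SECONDS_PER_DAY = 86_400.0

r_sat = R_EARTH + ALTITUDE

# Gravitational redshift: the satellite sits higher in the potential, so its
# clock gains this fraction relative to a clock on the ground.
grav_rate = GM_EARTH / C**2 * (1.0 / R_EARTH - 1.0 / r_sat)

# Time dilation: for a circular orbit v^2 = GM / r, and the moving clock
# loses v^2 / (2 c^2).
vel_rate = GM_EARTH / r_sat / (2.0 * C**2)

grav_per_day = grav_rate * SECONDS_PER_DAY
net_per_day = (grav_rate - vel_rate) * SECONDS_PER_DAY
range_error_km = net_per_day * C / 1000.0

print(f"gravitational: {grav_per_day * 1e6:.1f} microseconds/day")
print(f"net offset:    {net_per_day * 1e6:.1f} microseconds/day")
print(f"range error:   {range_error_km:.1f} km/day if uncorrected")
```

Multiplying the accumulated clock offset by the speed of light gives the ranging error, which is where the "kilometers within a day" comes from.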

Mercury's Perihelion Shift

Mercury provided Einstein with his first true "classical" test of general relativity, and it formed the immediate basis for the rapid acceptance of his theory by his peers. Astronomers had known since the time of Urbain Le Verrier in 1859 that Mercury's orbit (as measured by the location of its perihelion, the planet's closest approach to the sun) was slewing too quickly around the sun to be explained by known factors such as the perturbing influence of the other planets. All explanations for this phenomenon (such as the notion that a new planet named "Vulcan" lay inside Mercury's orbit) had failed. When Einstein found that his theory explained the anomalous perihelion advance perfectly with no special assumptions, he experienced heart palpitations and was (as he wrote to a colleague) "for several days beside myself with joyous excitement".

Einstein in 1933

The perihelion shift test, like most other gravitational tests, is now usually expressed using a formalism invented by Arthur S. Eddington and later developed by Kenneth Nordtvedt Jr. and Clifford Will into what is known as the Parametrized Post-Newtonian (PPN) framework. Eddington described weak spherically symmetric gravitational fields like that around the sun in a rather general form with only two parameters, β describing the nonlinearity in time warping and γ describing space warping. (There is also a third parameter α, but it does not test any aspect of relativity theory and merely allows one to rescale the value of Newton's gravitational constant G.) General relativity predicts that β and γ are both equal to one, and most of the experimental tests effectively place upper limits on |β-1| and/or |γ-1|. Mercury's anomalous perihelion shift is proportional to (2+2γ-β)/3, which is equal to one in general relativity. This is the only "classic" test that probes the nonlinear nature of Einstein's theory. Initial measurements relied on optical telescopes; modern ones are based on radar data and constrain any departure from general relativity to less than 0.3%. An important early source of systematic error came from uncertainty in solar oblateness (quadrupole moment), but this has now been well constrained from helioseismology. Perihelion shift affects other planets besides Mercury, but is far smaller. It has also been observed using radio telescopes in distant binary pulsar systems, where it is known as periastron shift.
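As an illustration of how the PPN factor enters, the sketch below evaluates the standard weak-field expression for the perihelion advance per orbit, 6πGM/(c^2 a (1-e^2)), scaled by (2+2γ-β)/3. Mercury's orbital elements and the solar constants are assumed values supplied here, and the solar quadrupole contribution is ignored.

```python
import math

GM_SUN = 1.32712440018e20   # m^3/s^2, solar gravitational parameter
C = 299_792_458.0           # speed of light, m/s
A_MERCURY = 5.7909e10       # m, semi-major axis (assumed)
E_MERCURY = 0.2056          # orbital eccentricity (assumed)
PERIOD_DAYS = 87.969        # orbital period in days (assumed)
RAD_TO_ARCSEC = math.degrees(1.0) * 3600.0


def perihelion_shift_per_orbit(a, e, beta=1.0, gamma=1.0):
    """Perihelion advance in radians per orbit, including the PPN factor."""
    ppn_factor = (2.0 + 2.0 * gamma - beta) / 3.0
    return ppn_factor * 6.0 * math.pi * GM_SUN / (C**2 * a * (1.0 - e**2))


def shift_arcsec_per_century(beta=1.0, gamma=1.0):
    """Accumulated advance over a Julian century, in arcseconds."""
    orbits = 36525.0 / PERIOD_DAYS
    per_orbit = perihelion_shift_per_orbit(A_MERCURY, E_MERCURY, beta, gamma)
    return per_orbit * orbits * RAD_TO_ARCSEC


print(f"GR (beta = gamma = 1): {shift_arcsec_per_century():.1f} arcsec/century")
print(f"beta = 1.003:          {shift_arcsec_per_century(beta=1.003):.2f} arcsec/century")
```

With β = γ = 1 this gives roughly 43 arcseconds per century, the anomaly that Le Verrier's successors could not explain. Because β enters with a factor of one third, a change in β shifts the result by only a third as much in fractional terms.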

Light Deflection

If Mercury's perihelion shift led to the acceptance of general relativity among Einstein's peers, then light deflection made him famous with the public. He had already found in 1911 that the equivalence principle implies some light deflection, since a beam of light sent horizontally across a room will appear to bend toward the floor if the room is accelerating upwards. (Similar arguments had in fact been proposed on purely Newtonian grounds by Henry Cavendish in 1784 and Johann Georg von Soldner in 1803.) In 1915, however, Einstein realized that space curvature doubles the size of the effect, and that it might be possible to detect it by observing the bending of light from background stars around the sun during a solar eclipse. He had to wait until the end of the war, when expeditions led by Arthur Eddington and Andrew Crommelin returned decent photographs of the eclipse of May 1919.

Eddington

The results vindicated Einstein's new theory, though with an accuracy of only about 30%. When they were announced in November that year, the press picked up the story and Einstein became an international star. Many have speculated on the reasons for Einstein's mythic and seemingly endless appeal. Part of the explanation must lie in the end of the most shattering war in history; the fact that a German-speaking physicist's theory had been confirmed by English observers portended a peaceful future where pure thought might triumph over narrow minds. Abraham Pais, Einstein's great scientific biographer, put it like this in Subtle is the Lord... (1982): "A new man appears abruptly ... He carries the message of a new order in the universe. He is a new Moses come down from the mountain to bring the law, a new Joshua controlling the motion of heavenly bodies. He speaks in strange tongues but wise men aver that the stars testify to his veracity ... His mathematical language is sacred yet amenable to transcription into the profane: the fourth dimension ... light has weight, space is warped ... He fulfills two profound needs in man, the need to know and the need not to know but to believe."

The light deflection angle is proportional to (1+γ)/2, which is equal to one in general relativity. Experimental limits on γ using optical telescopes never managed to improve very much on those obtained by Eddington and Crommelin, and it was not until the late 1960s that radio astronomers were able to make significant progress by using linked arrays of radio telescopes (VLBI) to measure the bending of radio waves from distant quasars around the sun. By 1995 these observations had confirmed general relativity to an accuracy of 0.04%. The entire sky is slightly distorted by light deflection around the sun, and since this effect reaches 4 milliarcseconds perpendicular to the earth-sun direction it must be taken into account by modern astrometric satellites such as Hipparcos, which determine the positions of millions of stars to within 3 milliarcseconds. These corrections confirm general relativity indirectly at the 0.3% level. It has also been possible to measure light deflection by the planet Jupiter, though with an accuracy of only about 50%. In cosmology, light deflection (better known as gravitational lensing) is used routinely to weigh dark matter, measure the Hubble parameter and even function as a cosmic "magnifying glass" to bring the faintest and most distant objects into closer view.
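The two angles mentioned above, the deflection at the sun's limb and the residual effect at right angles to the sun, follow from the same weak-field formula. The sketch below assumes an observer at 1 AU and a distant source, and uses the standard expression (1+γ)(GM/c^2 d) cot(χ/2) for a source at angular separation χ from the sun; the constants are supplied here rather than taken from the text.

```python
import math

GM_SUN = 1.32712440018e20   # m^3/s^2, solar gravitational parameter
C = 299_792_458.0           # speed of light, m/s
R_SUN = 6.957e8             # m, solar radius
AU = 1.495978707e11         # m, earth-sun distance
RAD_TO_ARCSEC = math.degrees(1.0) * 3600.0


def limb_deflection_arcsec(gamma=1.0):
    """Deflection of a ray grazing the solar limb: (1 + gamma)/2 * 4GM/(c^2 R)."""
    return 0.5 * (1.0 + gamma) * 4.0 * GM_SUN / (C**2 * R_SUN) * RAD_TO_ARCSEC


def deflection_arcsec(elongation_deg, gamma=1.0, observer_distance=AU):
    """Deflection for a distant source seen at a given angle from the sun."""
    chi = math.radians(elongation_deg)
    scale = (1.0 + gamma) * GM_SUN / (C**2 * observer_distance)
    return scale / math.tan(chi / 2.0) * RAD_TO_ARCSEC


print(f"at the limb, GR:        {limb_deflection_arcsec():.2f} arcsec")
print(f"at the limb, gamma = 0: {limb_deflection_arcsec(gamma=0.0):.2f} arcsec")
print(f"at 90 degrees:          {deflection_arcsec(90.0) * 1000:.1f} milliarcsec")
```

Setting γ = 0 reproduces the half-size value that follows from the equivalence principle alone, so the factor (1+γ)/2 is exactly the doubling due to space curvature that Einstein found in 1915.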

Shapiro Time Delay

Animation of the Shapiro Time Delay

Physicist Kip Thorne describes the Viking spacecraft measurement of the Shapiro Time Delay

Shapiro

Until the early 1960s it seemed that general relativity had been tested experimentally in every way it could, and gravitational physicists were left to focus on mathematical aspects of the theory. A frustrated Richard Feynman wrote to his wife as follows from a conference in 1962: "I am not getting anything out of the meeting. I am learning nothing ... There is a great deal of 'activity in the field' these days, but this 'activity' is mainly in showing that the previous 'activity' of somebody else resulted in an error or in nothing useful ... It is like a lot of worms trying to get out of a bottle by crawling all over each other ... Remind me not to go to any more gravity meetings!" The space age changed all that. In 1964, Irwin Shapiro realized that if general relativity was correct, a light signal sent across the solar system past the sun to a planet or satellite would be slowed in the sun's gravitational field by an amount proportional to the light-bending factor, (1+γ)/2, and that it would be possible to measure this effect if the signal were reflected back to earth. Typical time delays are on the order of several hundred microseconds; this is sometimes referred to as the "fourth classical test" of general relativity. Passive radar reflections from Mercury and Mars were consistent with general relativity to an accuracy of about 5%. Use of the Viking Mars lander as an active radar retransmitter in 1976 confirmed Einstein's theory at the 0.1% level. Other targets included artificial satellites such as Mariners 6 and 7 and Voyager 2, but the most precise of all Shapiro time delay experiments involved Doppler tracking of the Cassini spacecraft on its way to Saturn in 2003; this limited any deviations from general relativity to less than 0.002%, the most stringent test of the theory so far.
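The size of the delay can be estimated from the standard weak-field expression for a round trip, 2(1+γ)(GM/c^3) ln(4 r_E r_P / b^2), where b is the closest approach of the signal to the sun. The sketch below applies it to a signal sent to Mars and back that just grazes the solar limb; the circular-orbit distance for Mars and the grazing geometry are assumptions made for illustration.

```python
import math

GM_SUN = 1.32712440018e20   # m^3/s^2, solar gravitational parameter
C = 299_792_458.0           # speed of light, m/s
R_SUN = 6.957e8             # m, solar radius
AU = 1.495978707e11         # m, earth-sun distance
R_MARS = 1.524 * AU         # m, Mars-sun distance (assumed circular orbit)


def shapiro_round_trip(r_earth, r_planet, impact, gamma=1.0):
    """Excess round-trip light time in seconds for a ray passing the sun."""
    log_term = math.log(4.0 * r_earth * r_planet / impact**2)
    return 2.0 * (1.0 + gamma) * GM_SUN / C**3 * log_term


delay = shapiro_round_trip(AU, R_MARS, R_SUN)
print(f"round-trip delay to Mars at the solar limb: {delay * 1e6:.0f} microseconds")
```

The result is a few hundred microseconds on top of a round-trip light time of well over half an hour, which indicates the timing precision these experiments required.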

The Binary Pulsar

Pulsars are rapidly rotating neutron stars which emit regular radio pulses as they rotate. As such they act as clocks, which allows their orbital motions to be monitored very precisely. Tests based on these objects are particularly valuable because they allow us to probe gravitational fields stronger than those in our own solar system: not strong-field by any means, but arguably "moderate-field" tests. The first binary pulsar (a pulsar and another object in orbit around each other) was discovered in 1974 by Joseph Taylor and Russell Hulse during a routine search for new pulsars; it goes by the prosaic name B1913+16. The companion is also a compact object, likely a neutron star. As they orbit around each other, both stars are continuously accelerating, which causes them to emit gravitational radiation in the same way that an accelerated electric charge emits electromagnetic radiation. The emission of radiation leads to a loss of energy from the system, causing the two bodies to spiral toward each other and resulting in a gradual speeding up of the pulses from the pulsar. Precise timing measurements allow one to reconstruct three relativistic effects: the average rate of periastron shift, a combination of gravitational redshift and (special-relativistic) time dilation, and the rate of change of orbital period. Together these three pieces of information impose three constraints on the two unknown masses; the extra constraint can then be used to test the theoretical prediction for energy loss. If general relativity is assumed to be valid, then all three constraints are satisfied simultaneously to an accuracy of 0.2% or better. For this work Hulse and Taylor won the Nobel Prize in 1993.
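The predicted rate of change of the orbital period can be sketched from the quadrupole formula as applied to an eccentric binary. The orbital period, eccentricity and masses used below are illustrative round values for B1913+16 assumed here, not figures given in the text, so the output should be read as an order-of-magnitude check rather than a fit.

```python
import math

T_SUN = 4.925490947e-6   # G * M_sun / c^3, in seconds

# Illustrative parameters for B1913+16 (assumed, not from the text).
PB = 27906.98            # orbital period, s
ECC = 0.6171             # orbital eccentricity
M_PULSAR = 1.44          # solar masses
M_COMPANION = 1.39       # solar masses


def orbital_period_derivative(pb, ecc, m1, m2):
    """Dimensionless dPb/dt from quadrupole radiation (masses in solar units)."""
    # Eccentric orbits radiate far more strongly near periastron.
    enhancement = (1.0 + 73.0 / 24.0 * ecc**2 + 37.0 / 96.0 * ecc**4) / (1.0 - ecc**2) ** 3.5
    mass_term = m1 * m2 / (m1 + m2) ** (1.0 / 3.0)
    rate = (2.0 * math.pi * T_SUN / pb) ** (5.0 / 3.0)
    return -(192.0 * math.pi / 5.0) * rate * enhancement * mass_term


pb_dot = orbital_period_derivative(PB, ECC, M_PULSAR, M_COMPANION)
print(f"predicted dPb/dt = {pb_dot:.2e} seconds per second")
```

The negative sign expresses the shrinking orbit: the period shortens by a few parts in 10^12 of a second every second, which adds up to a measurable drift over years of timing.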

Hulse

Taylor

Observations of the binary pulsar do not constitute a direct detection of gravitational waves; we see only an energy loss that is consistent with the emission of gravitational radiation in precise agreement with Einstein's quadrupole formula (which, incidentally, he derived while bedridden with stomach ulcers in 1917). Nevertheless it is widely believed that gravitational waves do exist, and hoped that they will be directly detected eventually (see below). As of 2006, nine relativistic binary systems have been discovered with orbital periods of less than a day. Some, like B2127+11C, are virtual clones of B1913+16, while others show promise as further tests of Einstein's theory, like B1534+12 (whose orbital plane is seen almost edge-on) and J1141-6545 (in which the companion star is probably a white dwarf rather than a neutron star). Most fascinating is the recently discovered double pulsar system J0737-3039, in which radio pulses are detected from both stars, giving us so much information that the two masses are constrained by six constraints rather than three, allowing for four independent tests of general relativity. That all four of these tests are consistent with each other is itself impressive confirmation of the theory. After two and a half years of observation, the most precise of them (the Shapiro delay) verifies Einstein's theory to within 0.05%.

Gravitational Waves

Effect of a gravitational wave on a ring of particles

Direct detection of gravitational waves would verify one of general relativity's most striking predictions and open up a new astronomical window on the cosmos. There is good news and bad news about these waves. The good news is that they interact so weakly with matter that they can travel over vast distances without being scattered, potentially bringing us information from the most distant and most violent places in the universe. The bad news is that it is extremely difficult to get them to interact with a detector. Like electromagnetic waves (light), gravitational waves move at the speed of light and are "transverse": they cause test masses to accelerate at right angles to the direction of wave propagation, just as an electromagnetic wave does to test charges. A gravitational wave passing through your computer screen acts on a ring of free particles as shown in the diagram at left. In principle this is simple to detect; the particles behave as if they are being subjected to a strain exactly like a Newtonian tidal force. However, the motions involved are so tiny (the ring is "compressed" by at most 10^-20) that detecting them is an immense challenge. Early detectors were large metal cylinders designed to respond to such a force resonantly, like a bell. One such detector, a 3100-pound aluminum cylinder built by Joseph Weber in 1963, led to a claimed detection of gravitational waves in 1969, but it could never be duplicated and is generally agreed to have been spurious. Four modern versions of the resonant or "Weber detector" are in operation as of 2006 (ALLEGRO in the U.S.A., AURIGA and NAUTILUS in Italy and EXPLORER at CERN).
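The tidal pattern acting on the ring can be sketched numerically. The example below assumes a "plus"-polarized wave travelling perpendicular to the plane of the ring and works to first order in the strain h, so that one axis is stretched by the fraction (h/2)cos(phase) while the other is squeezed by the same fraction. The strain is hugely exaggerated, because a realistic value of 10^-20 is smaller than double-precision rounding error when added to 1.

```python
import math


def ring_positions(n_particles, h_plus, phase):
    """Positions of a unit ring of free particles under a plus-polarized wave."""
    stretch = 0.5 * h_plus * math.cos(phase)
    points = []
    for k in range(n_particles):
        theta = 2.0 * math.pi * k / n_particles
        # x is stretched while y is squeezed, and vice versa half a cycle later.
        x = (1.0 + stretch) * math.cos(theta)
        y = (1.0 - stretch) * math.sin(theta)
        points.append((x, y))
    return points


H_DEMO = 0.2   # exaggerated strain, chosen only to make the effect visible

for label, phase in (("phase 0", 0.0), ("half a cycle later", math.pi)):
    pts = ring_positions(4, H_DEMO, phase)
    width = pts[0][0] - pts[2][0]    # extent along x
    height = pts[1][1] - pts[3][1]   # extent along y
    print(f"{label}: width = {width:.2f}, height = {height:.2f}")
```

The ring alternates between being wide and short and being narrow and tall, while its area is unchanged to first order in the strain.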

February 11, 2016: Press Conference video on the first detection of gravitational waves by LIGO

Physicist Kip Thorne describes the LISA mission to detect gravitational waves

The most sensitive detectors use interferometry to make precise distance measurements. The Laser Interferometer Gravitational-Wave Observatory (LIGO) consists of two L-shaped pairs of interferometers 4 km long, in which beams of laser light measure the difference between the lengths of the two "legs" induced by a gravitational wave. Expected mirror displacements are smaller than the size of an atomic nucleus, and can only be measured with careful suppression of seismic, thermal and shot noise effects. LIGO began operation in August 2002. As of 2007, no gravitational waves have yet been detected, but the experiment is placing useful upper limits on the frequency of possible sources such as exploding supernovae and collisions or coalescences of compact objects like neutron stars and black holes. LIGO was joined by another interferometric detector, GEO 600, in November 2005, and by a third, VIRGO, in May 2007. Other experiments are in various stages of construction around the world, including an upgraded version of LIGO (Advanced LIGO) with at least ten times the initial sensitivity. All these ground-based detectors are sensitive primarily to the high-frequency gravitational waves produced by transient phenomena (explosions, collisions, inspiraling binaries). A complementary Laser Interferometer Space Antenna (LISA) is currently in the planning stages; this will look for the lower-frequency waves from quasi-periodic sources like supermassive black-hole binaries in the final months of coalescence and compact star binaries long before coalescence. LISA is a triangular system of three satellites in solar orbit forming an interferometer with legs millions of km long. It will rely crucially on some of the technology (such as drag-free control) that has been proven by Gravity Probe B.
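The claim about mirror displacements can be checked with one line of arithmetic. The sketch below assumes an optimally oriented wave, for which one leg lengthens by hL/2 while the other shortens by the same amount, and takes 10^-15 m as a rough nuclear size; both the strain values and the nuclear size are illustrative assumptions.

```python
ARM_LENGTH = 4000.0     # m, the 4 km legs described in the text
NUCLEUS_SIZE = 1e-15    # m, order-of-magnitude size of a nucleus (assumed)


def arm_length_difference(strain, arm_length=ARM_LENGTH):
    """Difference between the two leg lengths for an optimally oriented wave."""
    # One leg grows by strain * L / 2 and the other shrinks by the same amount.
    return strain * arm_length


for strain in (1e-20, 1e-21):
    delta = arm_length_difference(strain)
    ratio = delta / NUCLEUS_SIZE
    print(f"strain {strain:.0e}: difference = {delta:.1e} m ({ratio:.3f} nuclear sizes)")
```

Even for the larger strain the length difference is a small fraction of a nuclear diameter, which is why every noise source has to be suppressed so carefully.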

James Overduin, December 2007, Updated April 2016
